Why Codex Cache Looks Lower Now, and Why We Recommend WS More Strongly

Posted April 4, 2026 by XAI Technical Team ‐ 9 min read

If you have been using Codex through api.xairouter.com, you may have noticed something recently:

Some paths no longer show the same exaggerated cache hit behavior as before.

That is real, and it is intentional.

This article explains four things:

- Why the visible cache can look lower now.

- Why the old "high-cache" approach was actually changing request semantics.

- Why the current approach is more conservative but better for production.

- Why we now recommend native Responses WebSocket more strongly when your goal is extremely high cache.

OpenAI Prompt Caching 101

Before talking about XAI Router / Codex-Cloud behavior, it helps to restate a few key points from OpenAI's official Prompt Caching guide.

According to the official docs, Prompt Caching is not about "remembering the last answer." It is about:

- reusing an exact prompt prefix

- placing static content earlier so it can be cached more effectively

- placing dynamic user-specific content later so it does not break the shared prefix

- using

prompt_cache_keyconsistently so requests with common prefixes are more likely to route to machines that already hold that cache

The official guide also makes several engineering-relevant points explicit:

- For longer requests, Prompt Caching can significantly reduce both latency and input token cost.

- The best practice is to put stable content first and dynamic content last.

prompt_cache_keyshould be stable, but not too coarse; if one fixed prefix and one fixed key get too hot per minute, cache effectiveness can actually drop.- Prompt cache does not change output semantics. It caches prompt prefill, not the final answer.

- Prompt cache is not shared across organizations. Even identical prompts do not reuse cache across org boundaries.

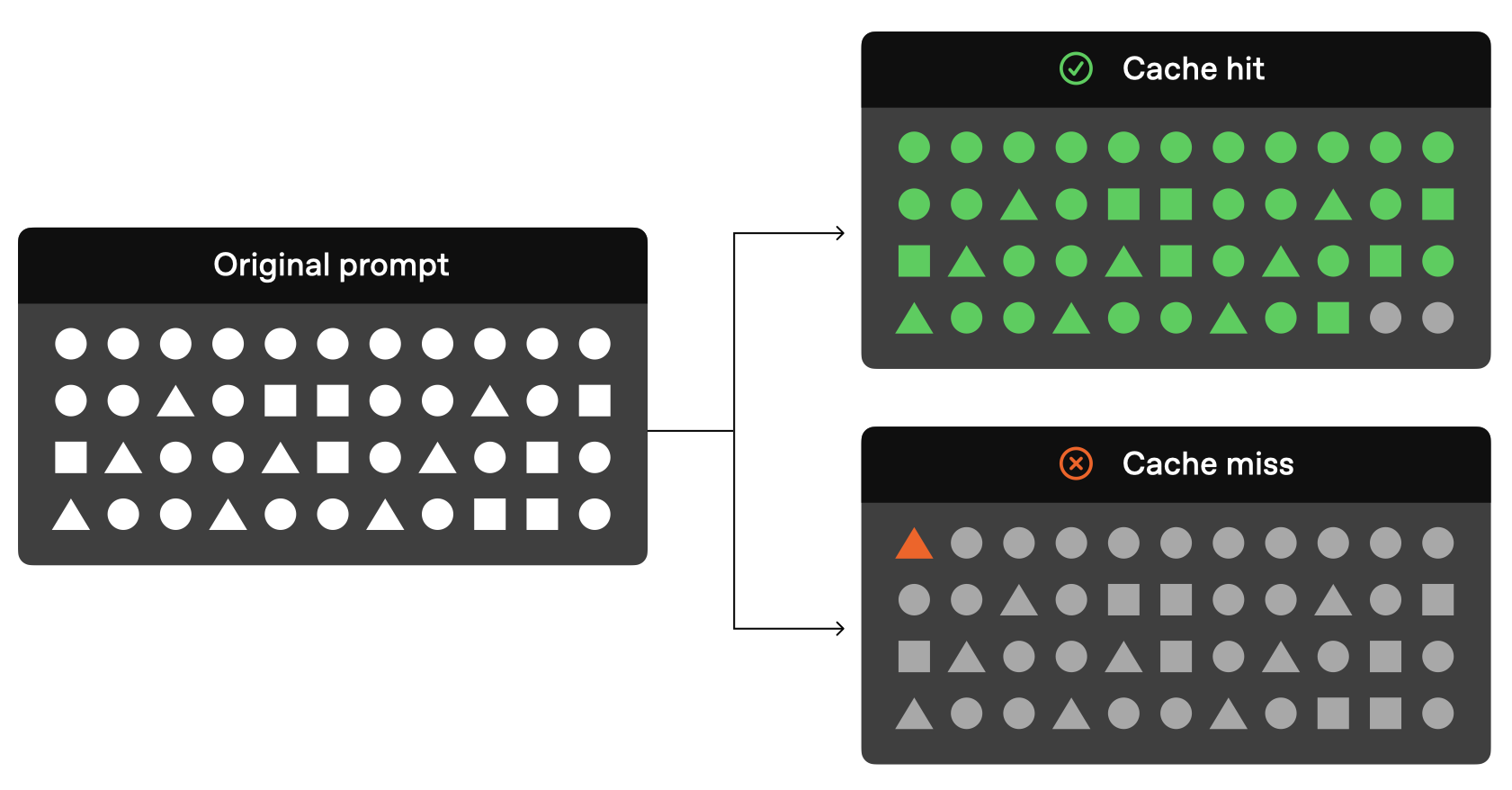

The visualization below comes from OpenAI's official Prompt Caching guide and is a useful way to think about the core mechanism: matching prefixes hit, changed prefixes miss.

Source: OpenAI Prompt Caching Guide https://developers.openai.com/api/docs/guides/prompt-caching

Once this official model is clear, the rest of the design becomes easier to understand:

- why "high cache" should not be bought by implicit continuation

- why stable prefixes and stable routing matter more

- why native Responses WebSocket is the strongest path for very high cache

The short version

We did not "turn cache off." We removed a class of dangerous implicit continuation.

Some of the old high-cache paths effectively did this:

- keep

prompt_cache_keyvery stable - then quietly upgrade it into something session-like such as

session_idorconversation_id

That could raise continuation/cache hit behavior, but it also had a clear downside:

- a new question could accidentally inherit the previous semantic context

- an HTTP request could become an implicit continuation request without the user realizing it

- the user-facing symptom was exactly what you would expect:

- "I asked this turn, but got something from the last turn"

- "This should be a new request, but it behaves like I am still in the old conversation"

So the recent change is not "cache got worse." It is that we cut this dangerous coupling:

cache key -> session identity

Why the old cache looked higher

Because it was not only benefiting from Prompt Caching.

In practice it was mixing two effects:

- real prompt cache reuse

- implicit conversational continuity

From the outside that looked great:

- repeated prefixes seemed easier to hit

- the same task seemed easier to continue

But under the hood it meant something more dangerous:

requests that should have been independent were being nudged into the same session context.

That is why we concluded:

The earliest "high-cache" approach was not pure cache optimization. It was unavoidably changing request semantics through implicit continuation.

What changed

1. WebSocket now uses explicit session semantics

On the WebSocket side, we now align to a stricter rule:

- only explicit

session_idis treated as session identity - only explicit

conversation_idis treated as conversation identity prompt_cache_keyis no longer upgraded into WS session identity- internal sticky headers are no longer propagated as upstream session identity

That means:

- WebSocket can still achieve very high cache

- but it is much less likely to blur session boundaries

2. In HTTP, prompt_cache_key is now only a cache hint

On the HTTP side, the rule is now:

prompt_cache_keystill remains in the payload- transformed paths can still derive a more stable compat

prompt_cache_key - but it is no longer silently upgraded into upstream

session_id/conversation_id

In other words:

- you can still benefit from Prompt Caching

- but the cache key is no longer confused with the session key

3. XAI Router sticky is now only local account affinity

The local sticky behavior has also been tightened:

- sticky is used only when there is a real

sessionHash - the same

sessionHashnow deterministically lands on the same upstream key/account - requests without a

sessionHashsimply keep using normal round-robin - sticky no longer leaks upstream

So local sticky now has one clear job:

keep similar requests on the same upstream account so cache locality improves.

Not:

silently turn unrelated requests into one upstream conversation.

Why cache may look lower now

Because we intentionally gave up part of the hit behavior that came from implicit continuation.

That old hit behavior looked attractive, but it had three problems:

- it was not pure

- it was not safe

- it was not easy to reason about

What dropped is mostly:

- continuation-driven apparent hit behavior

Not:

- true prompt-prefix cache capability

If your client still:

- uses a stable

prompt_cache_key - keeps stable prefixes

- prefers native Responses / WebSocket paths

then you can still achieve very high cache rates.

Why we recommend native WS more strongly now

If your goals are:

- very high cache

- low semantic contamination

- high throughput

- stronger behavior on long-running tasks

then the best answer is not "re-enable implicit continuation." It is:

use native Responses WebSocket directly.

Why:

1. WS is naturally better at explicit continuity

WebSocket is already a long-lived connection:

- one task can continue on one connection

- continuity is explicit and natural

- there is no need to pretend a cache key is a session key

2. Prefixes are naturally more stable within one WS connection

Within one native Responses WS session:

- model choice is stable

- instructions are more stable

- tool schema is more stable

- context stays concentrated

This is exactly the kind of environment Prompt Caching likes.

3. It can achieve very high cache without blurring semantics

We are not trying to reject high cache. We are trying to get high cache through the correct protocol path.

That is why WS is currently the preferred route.

Recommended baseline configuration

If you want Codex to prefer native Responses WebSocket and maximize cache in a production-friendly way, we recommend the following baseline.

Save it to:

~/.codex/config.tomlIf you already have your own Codex configuration, you can merge just the relevant fields instead of replacing the entire file.

model_provider = "xai"

model = "gpt-5.4"

model_reasoning_effort = "xhigh"

plan_mode_reasoning_effort = "xhigh"

model_reasoning_summary = "none"

model_verbosity = "medium"

model_context_window = 1050000

model_auto_compact_token_limit = 945000

tool_output_token_limit = 6000

approval_policy = "never"

sandbox_mode = "danger-full-access"

service_tier = "fast"

suppress_unstable_features_warning = true

cache_ttl = "30m"

[model_providers.xai]

name = "OpenAI"

base_url = "https://api.xairouter.com"

wire_api = "responses"

requires_openai_auth = false

env_key = "XAI_API_KEY"

supports_websockets = true

[features]

responses_websockets_v2 = true

multi_agent = true

[agents]

max_threads = 4

max_depth = 1

job_max_runtime_seconds = 1800Why this WS config helps produce extremely high cache

1. wire_api = "responses"

This keeps the path close to native Responses semantics instead of introducing extra compatibility layers.

The more native the path, the more stable the prefix tends to be.

2. supports_websockets = true

This allows the client to use native Responses WebSocket directly instead of rebuilding HTTP context turn after turn.

3. responses_websockets_v2 = true

This is the preferred Responses WebSocket mode for current Codex workflows.

Its value is not that it is "fancy." Its value is that it is:

- closer to official semantics

- more suitable for multi-turn task chains

- more stable for prompt prefix retention

4. model = "gpt-5.4"

This is currently one of the strongest baseline models we recommend for this workflow, and it is also included in automatic compat cache-key optimization.

5. Large context + high compaction threshold

model_context_window = 1050000

model_auto_compact_token_limit = 945000This combination means:

- keep the stable prefix intact for as long as possible

- compact later instead of too early

- preserve cache-friendly prefix structure during long tasks

If the threshold is too low, prefixes get compacted earlier and cache stability can drop.

6. cache_ttl = "30m"

This is a practical client-side engineering setting that pairs well with upstream Prompt Caching.

It does not replace upstream Prompt Caching. It helps keep the local workflow stable enough for upstream caching to remain effective.

What this configuration is best for

This baseline is best for:

- long-running coding sessions

- multi-turn work inside the same repository

- tasks with relatively stable instructions and tool definitions

- users who want very high cache without quietly changing session semantics

In short:

it is ideal for going deep on one task over time, not for forcing different tasks into one hidden context.

If you care more about cache than semantics

We still do not recommend going back to the old implicit continuation approach.

The reason is straightforward:

- yes, it can make cache look higher

- but it also unavoidably changes request semantics

- and it brings back the risk of "this turn answered like the last turn"

So the current recommendation is:

- if you want extremely high cache, use native Responses WS

- if you want explicit continuation, pass

session_id,conversation_id, orprevious_response_id - if you want safety and predictability, do not rely on implicit upgrades

Final takeaway

If cache looks lower recently, it is not because the system got worse.

It is because we deliberately removed the part of the old hit behavior that came from implicit continuation.

The current split is much cleaner:

prompt_cache_keyis for cachesession_id/conversation_id/previous_response_idare for explicit continuation- local sticky is for account affinity only

- native WebSocket is the strongest path for extremely high cache without changing semantics

So if your goal is:

- high cache

- high performance

- clean semantics

the answer is no longer "go back to the old implicit high-cache behavior."

The answer is:

use native Responses WebSocket with a stable Codex configuration baseline.